Challenge

While a plethora of information on changes made to a database is recorded in transaction log files, SQL Server transaction log reading has always been a big challenge for all SQL Server users since Microsoft never offered a built-in solution which would allow users to immerse themselves and explore the depths of transaction log files. Furthermore, lack of in-house features that would help understand, process, or analyze information within the transaction log files practically made it mandatory for users to turn towards 3rd party solutions.

In this article, the most important SQL Server transaction log reading features and requirements will be discussed, which will help find and pick the best solution for requirements at hand as well as offer powerful overall auditing and recovery capabilities.

Data sources

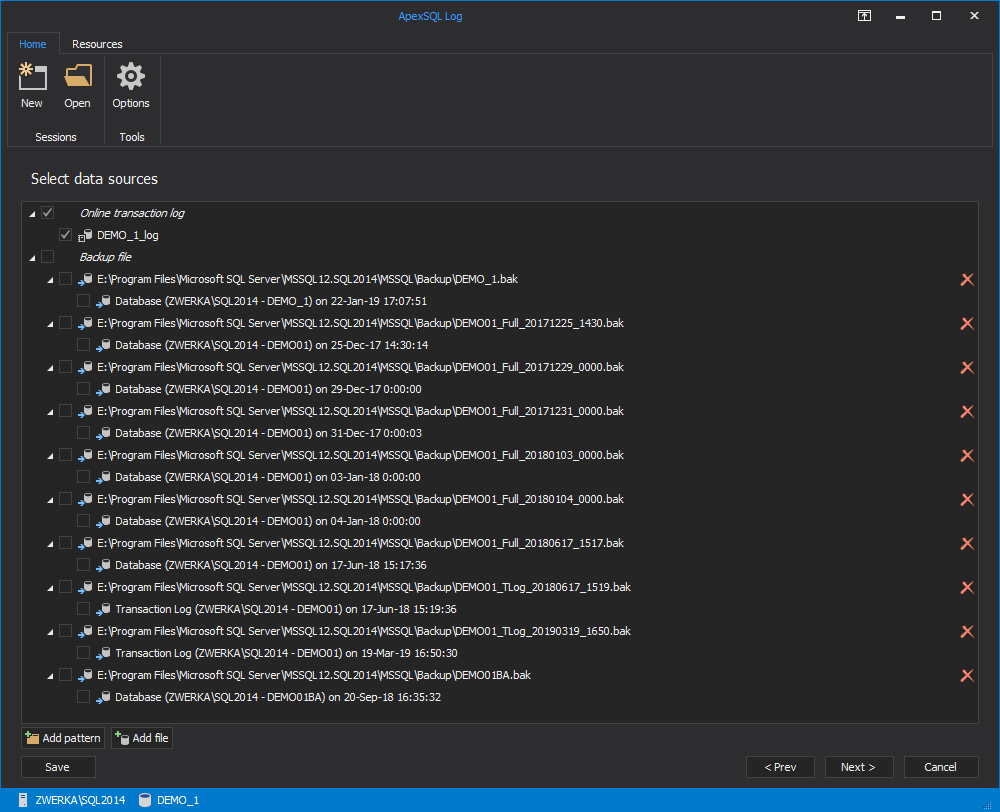

When SQL Server transaction log reading is considered, it is important to first and foremost understand that there are different types of transaction log files which can be used as an auditing data source:

- Online transaction log (LDF) – currently active transaction log files which store latest database changes

- Transaction log and full database backups – backups of ‘old’ transaction log files which are most often created automatically on a pre-determined schedule

- Detached transaction log – online log files which can be detached from the SQL Server for the purpose of migration or similar

3rd party tool solution

With the above in mind, finding the tool that will be able to handle all transaction log types and produce maximum results is a prime pick. ApexSQL Log, a SQL Server transaction log reading tool, can handle all the above-mentioned transaction log types. As long as *some* transaction log files are available, auditing will be possible, and so will be other related features based on gathering and processing transaction log data information.

The most important take from this is that the nature of SQL Server transaction log reading allows users two separate types of auditing:

- On-demand and continuous auditing – where auditing can be performed on a regular basis and audited data stored in various output formats, including SQL database, SQL script, XML, HTML and more

- Forensic auditing – which is ideal for post-disaster or ‘point in time’ auditing jobs when there is a requirement to audit operations which occurred sometime in the past, including the time before the auditing tool was even introduced in the production environment

What is audited

SQL Server transaction log reading should always yield results rich in information on audited events.

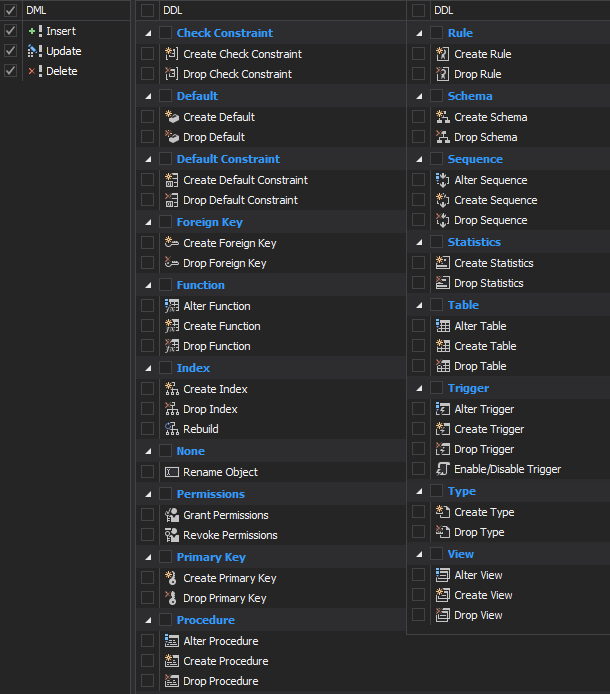

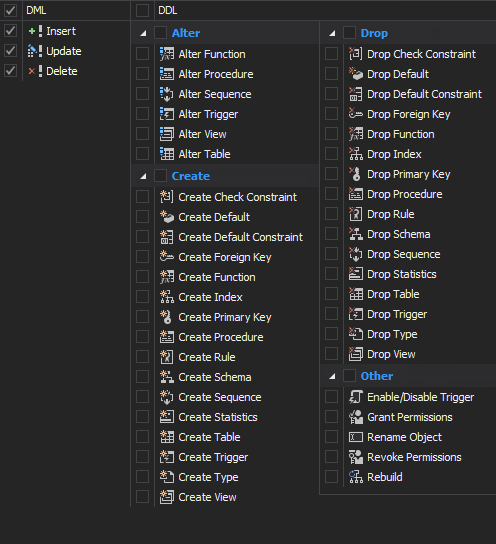

First and foremost, it is important to ensure that all DML and DDL operations are audited. This includes:

- DML: Insert, Update, Delete

- DDL: Create, Alter, Drop, Other

As well as being able to extract these operations over various objects, such as: Tables, Views, Schemas, Procedures, Triggers, Primary keys, Foreign keys, Indexes, Permissions, Functions, Sequences, Defaults, Check constraints, Rules, Statistic and more:

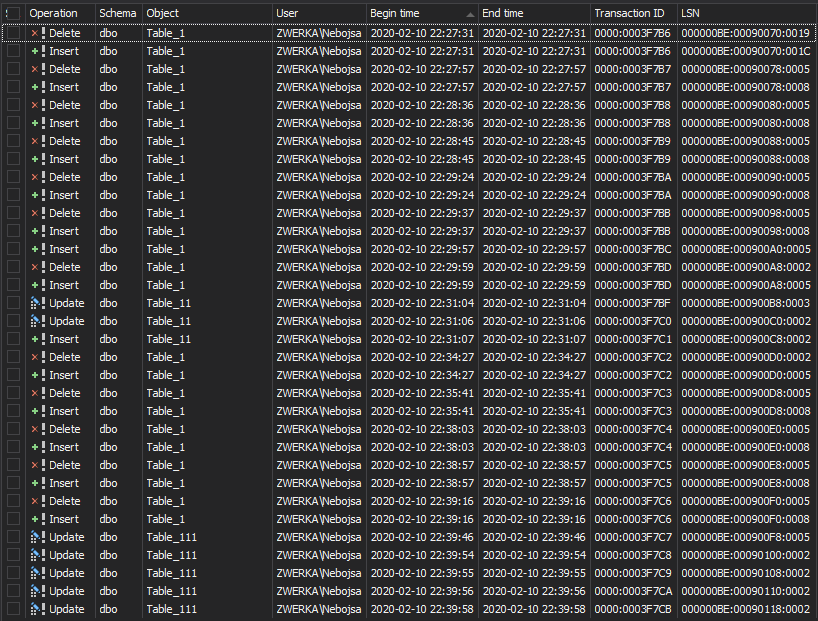

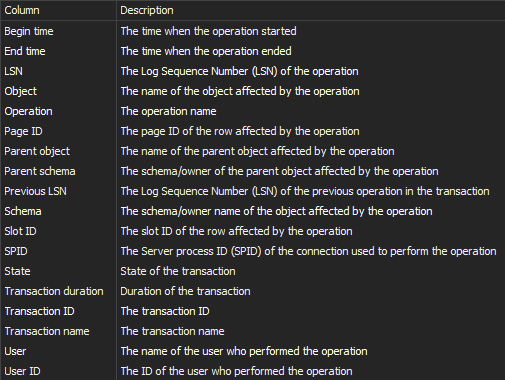

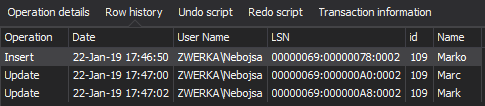

Next, once SQL Server transaction log reading is performed, the auditing tool should be able to extract various information on transactions and operations (from the t-log files), including the name and ID of the user that performed the operation, operation state, schema, time, LSN and more. Here is an example of standard information shown in the previously mentioned ApexSQL Log:

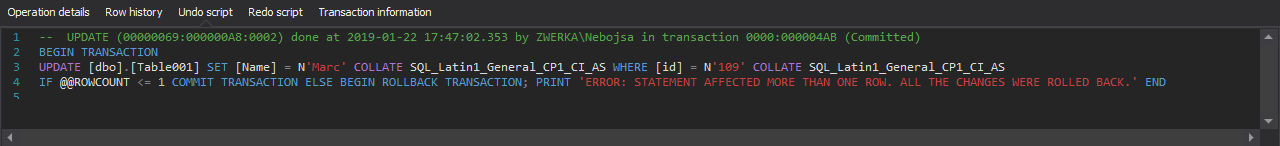

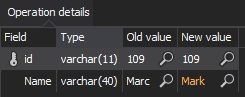

In a similar manner, various operation details and important information can be seen on each audited event/operation:

-

Operation details – including exact information on ‘what has been changed’ – for example – details on what was changed by the DML operation. An important extension here is the auditing of exact field values on update/delete operations – auditing the field values before the change and after the change is an important feature for any SQL Server transaction log reading solution since it not only allows users to see the original (and new) state of the data in the table field but also sets foundations for manual and automatic recovery as well:

-

Row history – complete historical information on the specified row, from the initial insert, all the way to the final update/delete

-

Individual scripts to roll the operation back/forward

A comprehensive overview of audited data

Any data audited via any type of tool or tool brand is as good as its ability to quickly and in an easy way display the results to the user and allow them to analyze and process it without much effort.

If the attention is moved to the Grid overview in ApexSQL Log, it shows a great example of displaying a vast amount of information in a comprehensive and easy to use grid where each operation/event can be individually analyzed and investigated:

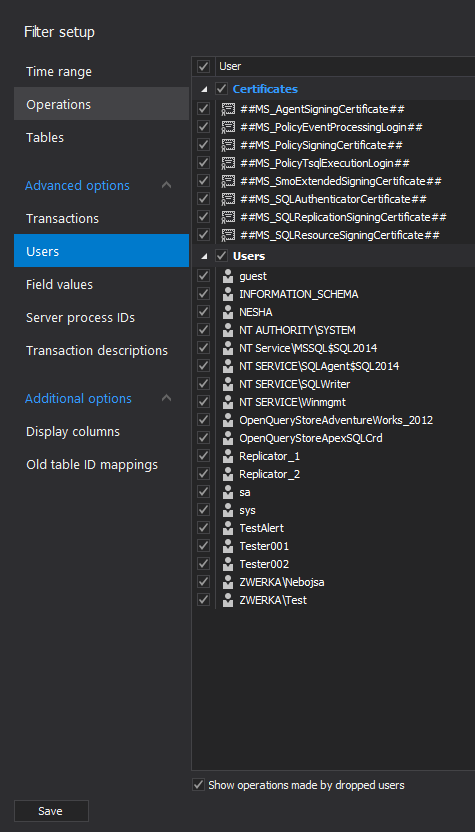

Furthermore, when dealing with vast amounts of audited data, having at hand many different filters that can be used to narrow down the results is a powerful tool for any analyst and DBA as well. SQL Server transaction log reading is way easier when tools allow filtering by operation, object, users, transactions, table field values, Server process IDs, Transaction descriptions, and more:

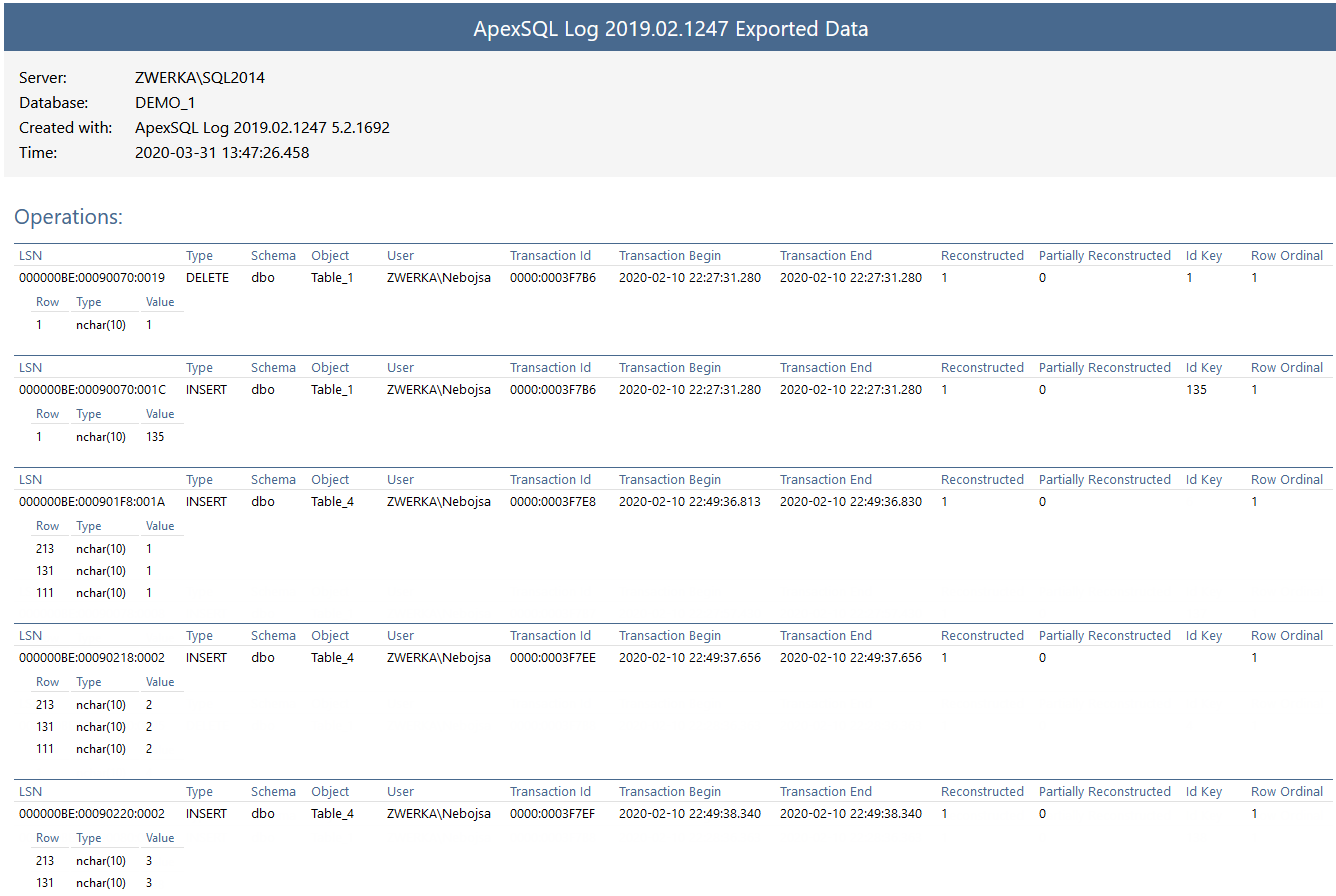

Reporting and saving/exporting audited data

Analyzing data gathered using SQL Server transaction log reading is very important for immediate insight into ‘who changed what’ in the SQL Server databases. Reporting, on the other hand, must allow different users to comply with auditing requirements to create comprehensive and highly focused reports in various formats. ApexSQL Log, for example, allows reports exporting into various formats, including SQL script or SQL bulk files, XML, HTML, CSV, or directly into an existing SQL Server database. Furthermore, different auditing/reporting jobs can be run to focus on only specific events, users, or time frames using different filters mentioned above:

Remote auditing

Many production environments span across a large number of servers, which may host dozens or even hundreds of individual SQL Servers. For these environments, it is particularly important to ensure that auditing can be centralized from the main GUI, and all remotely located SQL Server instances can be accessed and audited remotely with minimal intrusion.

In this example, ApexSQL Log utilizes a simple Windows service called ‘server-side components’, which allows the tool to remotely access SQL Server and all related log files after installing it in the production. No components go on the SQL Server itself, so production intrusion is kept at the bare minimum.

Continuous (unattended) auditing, automation and Command line support

Another quality of life feature in high demand is the tool’s ability to allow unattended continuous auditing. This means that the auditing will run on a pre-determined frequency (e.g., every 10 minutes) and generate auditing output as per configured details. Also, since many different transaction log backups may be included in the job, it is important to ensure that no data is missed, as well as there are no duplicate data entries to ensure a high-quality auditing trail, which is easy to work with. ApexSQL Log fully supports command line interface, and all options available in the GUI are available in full in CLI as well – and these are the strong foundations for any automation using PowerShell or batch files. To further simplify this, ApexSQL Log allows users to create their auditing configuration in GUI and then automatically creates PowerShell/batch files with all corresponding CLI switches for all GUI options.

Bonus features

Now that we’ve looked at those prime features to aim at when eyeing SQL Server transaction log reading solution, let’s look at some of the additional features which can tilt the scale and allow users to opt for the best potential solution which plethora of features for auditing, and for a couple of additional key areas which open up after digging deep into the secrets of transaction log files.

Disaster recovery

Yes! Disaster recovery by reading from transaction log files is indeed possible, and with a simple effort to add. ApexSQL Log demonstrates and allows recovery from malicious or inadvertent changes by first reading the operation information from the transaction logs file, and then allows users to create “undo” or “rollback” scripts which reverse the operation back and bring the data or structure back to the original state. E.g., if a set of data is deleted, ApexSQL Log will undo this by inserting the data back to the table. Same for updates – table fields that were updated to a new value can be again updated to the ‘old’ value, reverting the state of data (or structure in some cases) back to how it was.

Replication

High demand for replicating changes in SQL Server environments is all-present on the IT scene. While some built-in replication mechanisms are available in SQL Server itself, most of them lack many important features, are poorly implemented, or have big flaws/limitations. Alternatively, ApexSQL Log, as our solution of choice, has a strong replication potential and capabilities. Similar to the recovery mechanism mentioned above, instead of creating a rollback script, ApexSQL Log can create a “replay” script which will mimic the change and create a script that can be executed on another database/table to keep them in sync. Pair this feature with the before-mentioned automation, and the replication solution is born.

Support plan

Auditing, as well as aforementioned recovery and replication, are tedious tasks, which leave no room for mistakes or setbacks. Even with the strong solution in place, environment hazards should always be taken into consideration and planning ahead for these moments, which can put entire production to a halt, has and will always be a prime concern for DBAs. So will the ability to keep any downtime at a minimum. Here, a strong support plan and availability of support tech is crucial to be able to get ahold of them promptly and ensure the resolution is quick and effective may be the one to tip the scale. Taking ApexSQL Log into consideration, support plans allow users 24/7, 365-day access to the support techs to jump in and assist with critical tasks.

Final words

As seen above, when choosing the best possible SQL Server transaction log reading solution, it is important to pay attention to the following:

- Ability to read from different data sources (logs, backups, LDF)

- Ability to audit different DML and DDL operations

- Ability to audit different SQL Servers across the network/domain from a central point and minimal production intrusion

- Ease of use and easy, yet powerful, analytical access to the audited data

- Multiple reporting options and QOL features

- Unattended auditing

- Bonus/additional features which open up new perspectives and add additional important transaction log reading based solutions like replication or recovery

While not all of these features will be mandatory/important to all those that intend to perform SQL Server transaction log reading, they do offer a strong foundation and advise on how to evaluate best potential solution for the auditing requirement at hand and decide on how to meet compliance requirements as well.

April 3, 2020